To keep it local, I will focus on the homes located in Ocean County, NJ. I am planning to use cosine similarity together with the DBSCAN algorithm from the scikit-learn library in Python to cluster the houses in my new data set. How many clusters are in my data set? And what are the characteristics of each cluster? Once I have my clusters, they may help me find my ideal house more efficiently.

Collect the data

This time I use the “Requests” library in Python to retrieve my home data set from RapidAPI again. I run the query multiple times with five keywords that are allowed in the “features” column, such as “single_story,” “basement,” and “1_or_more_garage.” I add a for loop to my function to get as many homes with different features as possible and store them in a data frame. In the end, I get around 5,500 records in my home data set.

Since the distance from a home to a public school, a fire station, and a police station may be important for some customers, I use the “json” library in Python to retrieve those data sets from Homeland Infrastructure Foundation-Level Data (HIFLD), which provides national foundation-level geospatial data in the open public domain. This means I now have the addresses and coordinates of all public schools, fire stations, and police stations in Ocean County. I also use the “json” library to retrieve the criminal record of each police department over the recent five years from the FBI database. For some reason, the 2020 records are not available yet, so by “the recent five years” I mean 2015 to 2019.

Furthermore, I download the shapefile of Ocean County from the New Jersey Geographic Information Network (NJGIN) and use the “geopandas” library to read it in Python, so I can visualize the data sets on a map. The shapefile also includes the population and the population density of every municipality in Ocean County.
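The article does not show its clustering code. Below is a minimal sketch of how cosine similarity and DBSCAN could fit together. It assumes each home has already been turned into a numeric feature vector. The three-feature vectors are made up, and `eps` and `min_samples` are arbitrary. To stay dependency-free, it re-implements the core DBSCAN loop in plain Python using cosine distance (1 - cosine similarity) instead of calling scikit-learn. With scikit-learn, the analogous call would be `DBSCAN(eps=..., min_samples=..., metric="cosine")` from `sklearn.cluster`.

```python
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity; assumes neither vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def dbscan(points, eps, min_samples):
    """Minimal DBSCAN: returns one cluster label per point, -1 for noise."""
    n = len(points)
    labels = [None] * n  # None means "not visited yet"
    # Precompute each point's eps-neighbourhood (O(n^2), fine for a sketch).
    # A point counts as its own neighbour, as in scikit-learn.
    neighbours = [
        [j for j in range(n) if cosine_distance(points[i], points[j]) <= eps]
        for i in range(n)
    ]
    cluster = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        if len(neighbours[i]) < min_samples:
            labels[i] = -1  # noise for now; may later turn out to be a border point
            continue
        cluster += 1  # i is an unvisited core point: start a new cluster
        labels[i] = cluster
        queue = list(neighbours[i])
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # former noise becomes a border point
            if labels[j] is not None:
                continue  # already assigned; border points are not expanded
            labels[j] = cluster
            if len(neighbours[j]) >= min_samples:
                queue.extend(neighbours[j])  # j is a core point: keep growing
    return labels


# Hypothetical, already-scaled feature vectors for seven homes.
homes = [
    [1.0, 0.9, 0.1],
    [0.9, 1.0, 0.0],
    [1.0, 1.0, 0.1],
    [0.1, 0.0, 1.0],
    [0.0, 0.1, 0.9],
    [0.1, 0.1, 1.0],
    [0.0, 1.0, 0.0],
]
labels = dbscan(homes, eps=0.05, min_samples=2)
print(labels)  # [0, 0, 0, 1, 1, 1, -1]
```

Cosine distance compares only the direction of two feature vectors, not their magnitude. In practice the features would usually be scaled first, and that scaling step is an assumption here. Homes labelled -1 are ones that fall into no dense group at the chosen `eps`.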

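The article does not say how the distance from a home to the nearest school, fire station, or police station is measured. One common choice, assumed here, is the great-circle (haversine) distance between latitude/longitude pairs, such as the coordinates retrieved from HIFLD. The facility names and coordinates below are hypothetical placeholders near Ocean County, not real HIFLD records.

```python
import math

EARTH_RADIUS_MILES = 3958.8  # mean Earth radius


def haversine_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance in miles between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = math.radians(lat2 - lat1)
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))


def nearest_facility(home_lat, home_lon, facilities):
    """Return (name, miles) for the facility closest to the home.

    `facilities` is a list of (name, lat, lon) tuples.
    """
    return min(
        ((name, haversine_miles(home_lat, home_lon, lat, lon))
         for name, lat, lon in facilities),
        key=lambda pair: pair[1],
    )


# Hypothetical placeholder records, not real HIFLD data.
fire_stations = [
    ("Station A", 39.96, -74.21),
    ("Station B", 40.05, -74.10),
]
name, miles = nearest_facility(39.95, -74.20, fire_stations)
print(f"{name}: {miles:.2f} miles")
```

Running the same function once per facility type (schools, fire stations, police stations) would give each home three distance features that could sit alongside its other attributes in the data frame.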
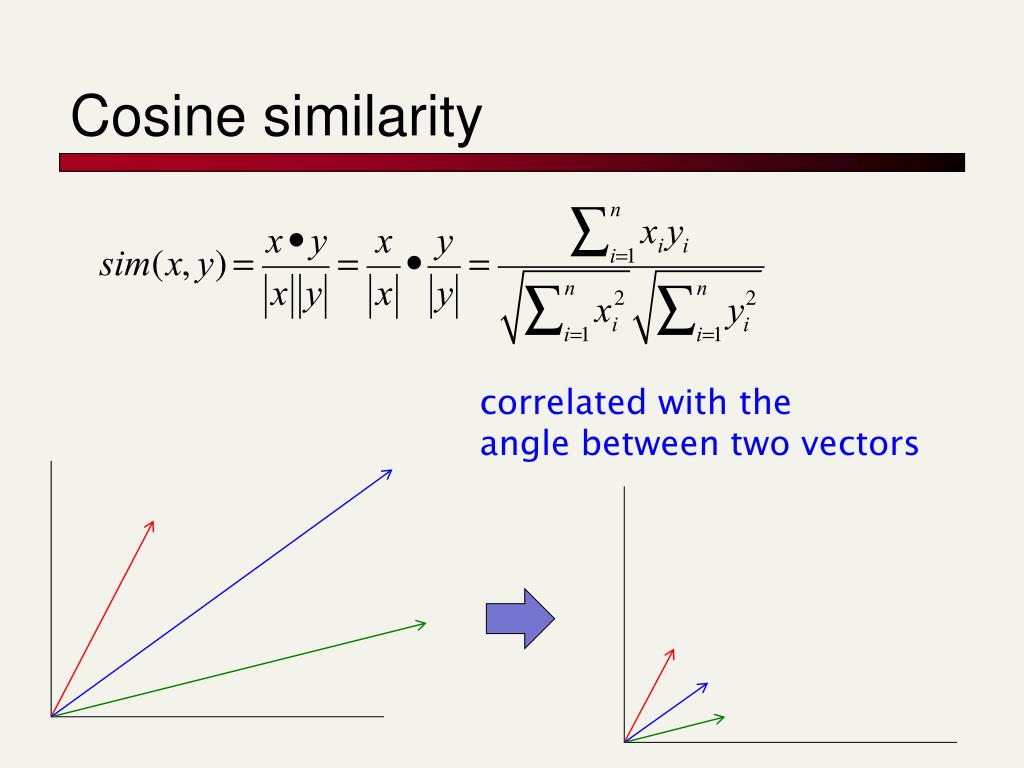

Although I showed my interest in identifying the cheapest home with all the features I love in my first article, I ended up encountering three limitations: only 200 homes, a lack of geospatial data on other important facilities, and a lack of a background map. In this article, I am going to continue exploring more data on the web and overcome those limitations.

I was trying to use the GloVe model pre-trained by the Stanford NLP group (link). However, I noticed that my similarity results showed some negative numbers. That immediately prompted me to look at the word-vector data file. Apparently, the values in the word vectors were allowed to be negative, which explained why I saw negative cosine similarities. I am used to the concept of cosine similarity of frequency vectors, whose values are bounded in $[0, 1]$. I know for a fact that the dot product and the cosine function can be positive or negative, depending on the angle between the vectors. But I have a really hard time understanding and interpreting negative cosine similarity.

For example, if one pair of words has a similarity of -0.1, is it less similar than another pair whose similarity is 0.05? How about comparing a similarity of -0.9 to 0.8? Should I just look at the absolute value of the scores? Or should I look at the absolute value of the minimal angle difference from $n\pi$?

It's right that cosine similarity between frequency vectors cannot be negative, since word counts cannot be negative. With word embeddings (such as GloVe), however, you can have negative values. A simplified view of word-embedding construction is as follows. You assign each word a random vector in $\mathbb{R}^d$. Next, you run an optimizer that tries to nudge two similar vectors $v_1$ and $v_2$ close to each other, or drive two dissimilar vectors $v_3$ and $v_4$ further apart (as per some distance, say cosine). You run this optimization for enough iterations. At the end, you have word embeddings that satisfy one criterion only: similar words have closer vectors, and dissimilar words have vectors farther apart. The end result might leave some dimension values negative and some pairs with negative cosine similarity, simply because the optimization process did not care about this criterion. It may have nudged some vectors well into negative values. The dimensions of the vectors don't correspond to word counts; they are just arbitrary latent concepts that admit values in $(-\infty, \infty)$.
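The simplified construction described above can be sketched as a toy optimizer. It illustrates that description only and is not GloVe's actual training objective, which fits dot products to log co-occurrence counts. The sketch rests on several assumptions. There are four made-up words with 5-dimensional vectors. Words 0 and 1 are labelled similar, and words 2 and 3 are labelled dissimilar. Training uses plain gradient steps on cosine similarity. To sharpen the point, every vector starts with non-negative entries, so every initial cosine similarity is non-negative.

```python
import math
import random


def cosine(u, v):
    """Cosine similarity of two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def cosine_grad(u, v):
    """Gradient of cosine(u, v) with respect to u."""
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    c = cosine(u, v)
    return [b / (nu * nv) - c * a / (nu * nu) for a, b in zip(u, v)]


def step(vectors, i, j, lr, sign):
    """Move words i and j along the cosine gradient.

    sign=+1 pulls the pair together, sign=-1 pushes it apart.
    Both gradients are computed before either vector is updated.
    """
    gi = cosine_grad(vectors[i], vectors[j])
    gj = cosine_grad(vectors[j], vectors[i])
    vectors[i] = [a + sign * lr * g for a, g in zip(vectors[i], gi)]
    vectors[j] = [b + sign * lr * g for b, g in zip(vectors[j], gj)]


rng = random.Random(0)
dim = 5
# Start every word with a random vector whose entries are all in [0, 1).
vectors = [[rng.random() for _ in range(dim)] for _ in range(4)]
similar, dissimilar = [(0, 1)], [(2, 3)]

before = cosine(vectors[2], vectors[3])
for _ in range(200):
    for i, j in similar:
        step(vectors, i, j, lr=0.1, sign=+1)
    for i, j in dissimilar:
        step(vectors, i, j, lr=0.1, sign=-1)
after = cosine(vectors[2], vectors[3])

print(f"dissimilar pair: {before:.3f} -> {after:.3f}")
print("negative entries appeared:", any(x < 0 for v in vectors for x in v))
```

Nothing in the update rule keeps entries non-negative, so pushing the dissimilar pair apart drives their cosine below zero. That can only happen once some entries have become negative.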

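To make the sign question concrete, here is a small sketch contrasting made-up word-count vectors with made-up embedding-style vectors. Every count is non-negative, so the dot product (and therefore the cosine) of two count vectors can never be negative. Embedding entries can be negative, so their cosine can drop below zero. The angle is printed because cosine is strictly decreasing in the angle on $[0, \pi]$. A raw score of -0.1 therefore always corresponds to a wider angle than 0.05, which is one mathematical fact to weigh when deciding between ranking by raw score and ranking by absolute value.

```python
import math


def cosine(u, v):
    """Cosine similarity of two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def angle_degrees(similarity):
    """Angle between two vectors, recovered from their cosine similarity."""
    # Clamp to guard against floating-point values slightly outside [-1, 1].
    return math.degrees(math.acos(max(-1.0, min(1.0, similarity))))


# Made-up word-count vectors: all entries are >= 0.
counts_a = [3, 0, 1, 2]
counts_b = [0, 4, 0, 1]
# Made-up embedding-style vectors: entries may be negative.
emb_a = [0.5, -0.2, 0.1]
emb_b = [-0.4, 0.3, -0.1]

count_sim = cosine(counts_a, counts_b)  # cannot be negative
emb_sim = cosine(emb_a, emb_b)          # can be negative
print(f"counts: {count_sim:.3f}, embeddings: {emb_sim:.3f}")

# Larger cosine means smaller angle, so -0.1 sits further apart than 0.05.
for s in (0.8, 0.05, -0.1, -0.9):
    print(f"similarity {s:+.2f} -> angle {angle_degrees(s):.1f} degrees")
```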